The 10 Hottest Big Data Tools Of 2022

Data is an increasingly valuable asset for businesses and a critical component of many digital transformation and business automation initiatives. Here’s a look at 10 tools in the big data space, from cloud data platforms and next-generation databases to advanced data integration and transformation technologies, that really caught our attention in 2022.

Taking On The Big Challenges Of Big Data

By 2025, the total amount of digital data and information created, captured, copied and consumed worldwide is expected to reach 181 zettabytes, up from 79 zettabytes in 2021, according to an estimate from market researcher Statista.

All that data presents opportunities for businesses that can find a way to “operationalize” it, for example by analyzing it and using the resulting insights for competitive advantage.

But that means having the right tools to manage that ever-growing wave of data. Not surprisingly, global spending on big data products and services is expected to explode from $162.6 billion in 2021 to $273.4 billion in 2026, according to a MarketsandMarkets report. And that means opportunities for solution providers.

This year has seen the debut of a number of hot next-generation big data tools, both from established companies and startups, that are setting the pace in data management. They include advanced databases, cloud data platforms, data integration and transformation tools, and software that helps businesses and organizations in specific vertical industries get the most out of data lakehouse systems.

Here’s a look at 10 big data tools that caught our attention in 2022.

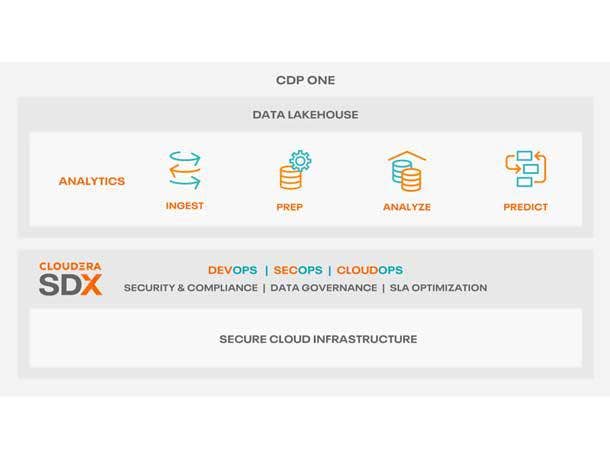

Cloudera Data Platform One

Cloudera Data Platform One is an all-in-one data lakehouse Software-as-a-Service offering that the company said makes it easier and less risky for businesses to move their big data workloads to the cloud and enable self-service business analytics and data science tasks.

Launched in August, the CDP One service has built-in cloud compute and cloud storage, machine learning, enterprise-grade security, low-code tools and streaming data analytics capabilities.

Nearly three-quarters of all organizations continue to run their business analytics and data workloads in on-premises systems due to security concerns and staffing needs, according to market researcher Ventana Research. Cloudera said the highly automated CDP One reduces the need for trained cloud, security and monitoring operations staff.

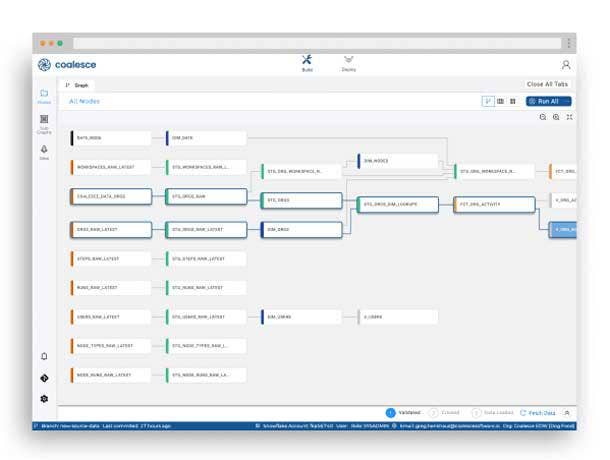

Coalesce

Data transformation is often the biggest bottleneck in the data analytics process, according to Coalesce. The Coalesce cloud-native data transformation platform is designed to make data transformation and building data pipelines easier while at the same time scaling up to support the biggest data warehouse systems in the world.

The company said traditional code-first data transformation tools limit scalability. Coalesce uses a “column-aware architecture” that enables data teams to build data warehouses with scalable, reusable and tested data patterns (reusable steps in a data transformation pipeline that represent logical transformations), making data transformations more accurate, efficient, repeatable and verifiable at scale, according to the company.

Despite the technology’s advanced capabilities, Coalesce also touts the ease of use of its offering, with an intuitive graphical user interface that brings its data transformation capabilities to a wider audience beyond data engineering programmers.

Coalesce is particularly focused on providing data transformation capabilities for the Snowflake data cloud with the ability to generate Snowflake-native code.

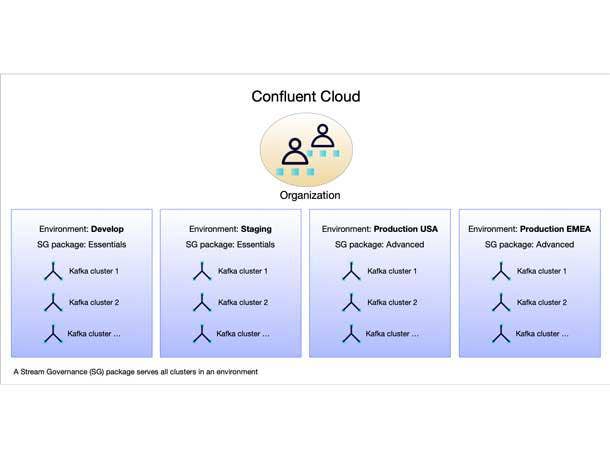

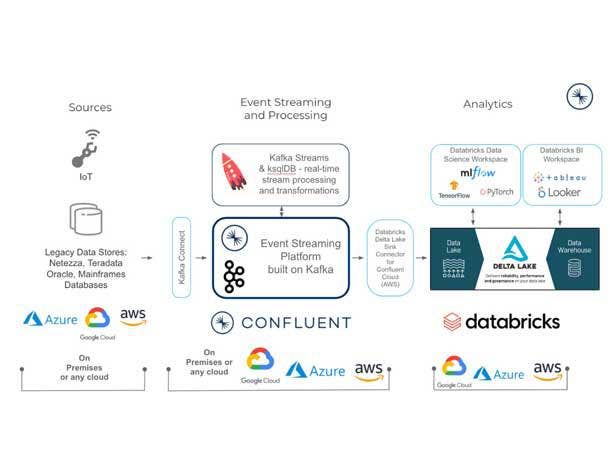

Confluent Stream Designer and Confluent Stream Governance Advanced

Confluent’s data streaming platform for “data in motion,” based on the Apache Kafka open-source platform, is becoming a critical component of many businesses’ big data operations, enabling real-time data from multiple sources to constantly stream throughout an organization.

In October, Confluent unveiled Stream Designer, a visual development tool for building and deploying streaming data pipelines on the Confluent platform. With its point-and-click visual builder, Stream Designer brings those capabilities to a wider audience of developers beyond specialized Kafka experts, according to Confluent. The company called the tool a major advance in “democratizing data streams,” allowing organizations to connect more data sources easily.

At the same time, Confluent debuted Stream Governance Advanced, which adds new capabilities to its suite of fully managed data governance tools, including point-in-time data lineage insight, sophisticated data cataloging and global data quality controls.

Databricks Vertical Industry Applications

Through the first half of 2022, Databricks, a leading data lakehouse platform developer, launched a series of application packages targeted at specific vertical industries that tap into the functionality of the Databricks Lakehouse Platform.

The new software included the Lakehouse for Financial Services, the Lakehouse for Media and Entertainment, the Lakehouse for Healthcare and Life Sciences, and the Lakehouse for Retail and Consumer Goods.

The Lakehouse for Financial Services, for example, provides customers in the banking, insurance and capital markets sectors with business intelligence, real-time analytics and AI capabilities. It includes data solutions and use-case accelerators tailored for industry-specific applications such as compliance and regulatory reporting, risk management, fraud and open banking.

The Lakehouse for Healthcare and Life Sciences addresses challenges in data-intensive tasks such as pharmaceutical development and disease risk prediction, using tailored data, AI and analytics accelerators.

Through the Databricks Brickbuilder Solutions program, launched in April, the new software packages create opportunities for channel partners working within specific vertical industries.

dbt Labs

dbt Labs gained serious momentum in 2022 with its cloud-based data transformation workflow tools, which help businesses and organizations build data pipelines and transform, test and document data within cloud data warehouse systems.

dbt Cloud and its integrated development environment launched in 2019, followed by a series of technology enhancements and expansions, including dbt Core v1.0 in late 2021. The dbt Labs platform includes a development framework that combines SQL development with software engineering best practices such as modularity, portability, CI/CD and documentation.
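To make the “test” step concrete: dbt ships built-in generic tests such as `unique` and `not_null`, which are declared in YAML and compiled to SQL queries that select failing records; a test passes when its query returns no rows. The sketch below is plain Python rather than dbt itself, and mirrors that pass-when-zero-failures logic on an in-memory list of rows. The function names and the `orders` sample data are hypothetical.

```python
from typing import Any, Dict, List

Row = Dict[str, Any]


def not_null_failures(rows: List[Row], column: str) -> List[Row]:
    """Rows where `column` is missing or None (what a not_null check would flag)."""
    return [r for r in rows if r.get(column) is None]


def unique_failures(rows: List[Row], column: str) -> List[Any]:
    """Values of `column` that occur more than once (what a unique check would flag)."""
    counts: Dict[Any, int] = {}
    for r in rows:
        value = r.get(column)
        if value is not None:  # nulls are left to the not_null check
            counts[value] = counts.get(value, 0) + 1
    return [v for v, n in counts.items() if n > 1]


def run_tests(rows: List[Row], column: str) -> Dict[str, bool]:
    """Run both checks; as in dbt, a test passes when it finds zero failing records."""
    failures = {
        "not_null": len(not_null_failures(rows, column)),
        "unique": len(unique_failures(rows, column)),
    }
    return {name: n == 0 for name, n in failures.items()}


# Hypothetical sample data: one duplicated key and one missing key.
orders = [
    {"order_id": 1, "amount": 20.0},
    {"order_id": 2, "amount": 35.5},
    {"order_id": 2, "amount": 12.0},
    {"order_id": None, "amount": 8.0},
]
print(run_tests(orders, "order_id"))  # {'not_null': False, 'unique': False}
```

In dbt itself the same checks would run as SQL inside the warehouse, so the data never has to leave it; the Python version only shows the pass/fail rule.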

dbt Labs sees its technology playing a central role in the cloud data analytics “stack.” This year, a number of players in the big data space established alliances with dbt Labs and/or linked their products with its platform and tools, including data lakehouse developer Databricks and cloud data analytics provider Starburst.

Dremio Cloud, Sonar And Arctic

The Dremio Cloud data lakehouse went live in March, providing a way for data analysts, data engineers and data scientists to tap into huge volumes of data for business analytics, machine learning and other tasks. The cloud-native service is based on the Dremio SQL Data Lake Platform, which includes a query acceleration engine and semantic layer, and works directly with cloud storage systems.

The Dremio Cloud lakehouse is available in a commercial enterprise edition that provides advanced security controls, including custom roles and enterprise identity providers, and enterprise support options. A free standard edition of the service is also offered.

At the same time as the Dremio Cloud launch, Dremio debuted Dremio Sonar, a new release of the company’s SQL query engine that powers the cloud lakehouse system. The company has also offered previews of Dremio Arctic, a new metadata and data management service that will work with Dremio Cloud when it becomes generally available.

EdgeDB and EdgeDB Cloud

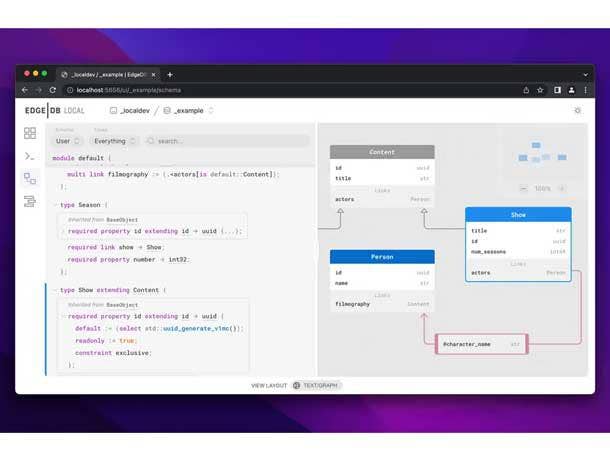

Startup EdgeDB has developed an open-source, graph-relational database with the not-so-modest goal of redefining legacy relational database software.

The company’s message is that relational databases and the SQL programming language are built on technology developed decades ago and are increasingly inadequate for today’s applications. The company calls its next-generation database “a spiritual successor to SQL and the relational paradigm.”

With the Postgres query engine at its core, the EdgeDB database (along with its EdgeQL query language) incorporates graph schema technology that stores data as objects with properties connected by links. The result is a hybrid graph-relational database that maps relationships among stored data more effectively than legacy relational database systems, according to the company.
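The object-and-link model can be sketched in plain Python, assuming a simple movie/actor schema chosen for illustration; the `Person` and `Movie` classes and the `movie_shape` function are hypothetical and are not EdgeDB’s API. Objects carry properties, and a link points directly at other objects, so a nested result comes from following links rather than from joining through a separate association table, as a typical relational schema would require.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Person:
    name: str  # property


@dataclass
class Movie:
    title: str  # property
    year: int   # property
    # Multi-valued link: references to other objects, not foreign-key IDs.
    actors: List[Person] = field(default_factory=list)


def movie_shape(movie: Movie) -> dict:
    """Follow the `actors` link directly and return a nested result (hypothetical helper)."""
    return {
        "title": movie.title,
        "year": movie.year,
        "actors": [{"name": p.name} for p in movie.actors],
    }


ada = Person("Ada")
grace = Person("Grace")
film = Movie("Example Film", 2022, [ada, grace])
print(movie_shape(film))
# {'title': 'Example Film', 'year': 2022, 'actors': [{'name': 'Ada'}, {'name': 'Grace'}]}
```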

Monte Carlo Data Reliability Dashboard

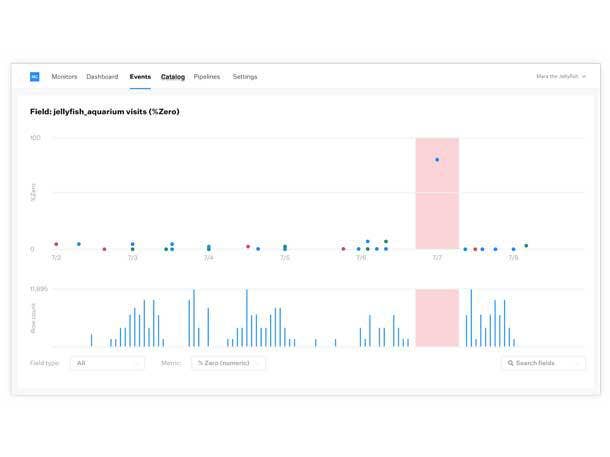

The Monte Carlo Data Reliability Dashboard, part of the company’s Data Observability Platform, helps data teams better understand the overall health of an organization’s data assets and communicate to key business managers the importance of having reliable data.

The dashboard, launched in October, provides data reliability KPI (key performance indicator) metrics around data distribution, volume, freshness, lineage and schema that help data managers observe trends and validate the progress of data reliability initiatives.
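The freshness and volume KPIs can be illustrated with a minimal sketch, assuming a table’s last-update timestamp and a short history of daily row counts are already available. This is not Monte Carlo’s implementation: the function names, the 24-hour refresh interval and the simple z-score rule for volume anomalies are hypothetical choices made for illustration.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, pstdev
from typing import List


def is_stale(last_updated: datetime, expected_interval: timedelta, now: datetime) -> bool:
    """Freshness: flag a table whose latest update is older than its expected refresh interval."""
    return now - last_updated > expected_interval


def volume_anomaly(history: List[int], today: int, z_threshold: float = 3.0) -> bool:
    """Volume: flag today's row count if it is more than z_threshold standard deviations
    from the historical mean. Hypothetical rule, not Monte Carlo's detection method."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:  # flat history: any change counts as an anomaly
        return today != mu
    return abs(today - mu) / sigma > z_threshold


now = datetime(2022, 10, 15, 12, 0, tzinfo=timezone.utc)
print(is_stale(datetime(2022, 10, 14, 6, 0, tzinfo=timezone.utc), timedelta(hours=24), now))  # True (30 hours old)
print(volume_anomaly([1000, 1020, 980, 1010, 990], 400))  # True (row count collapsed)
```

Tracking how often checks like these fire, and how long each flagged incident stays open, is the kind of trend data a reliability dashboard rolls up into KPIs.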

The dashboard includes metrics about time to detection and resolution of data incidents. It also offers data engineers an interactive data lineage map as they troubleshoot “data breakages,” including providing a unified view of affected data tables and their upstream dependencies.

The dashboard is also integrated with Microsoft Power BI, allowing data engineering teams to triage data incidents that specifically affect Power BI dashboards and users, and helping ensure that changes to data tables and schemas can be made safely.

Qlik Cloud Data Integration

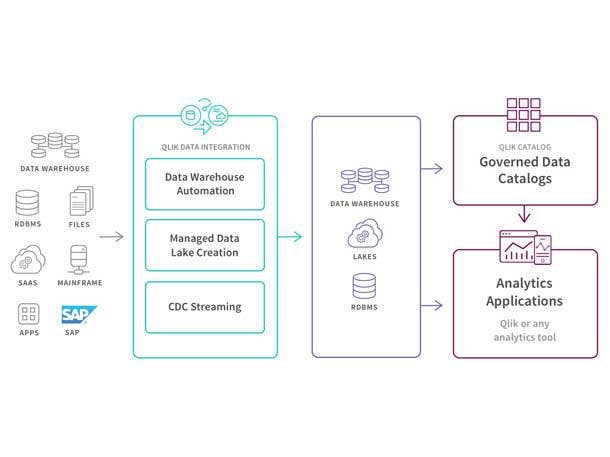

In November Qlik unveiled Qlik Cloud Data Integration, an enterprise integration Platform as a Service that the company said supports enterprise data strategies through a real-time data integration fabric that connects all applications and data sources to the cloud.

Qlik Cloud Data Integration is a set of SaaS services that the company, a developer of business analytics and data integration software, said is designed for analytics and “data engineers deploying enterprise integration and transformation initiatives.”

The services create a data fabric that unifies, transforms and delivers data—both on-premises and in the cloud—across an organization through flexible, governed and reusable data pipelines. The platform provides a common set of cloud services that integrate with Amazon Web Services, Microsoft Azure Synapse, Google Cloud, Snowflake and Databricks.

Qlik Cloud Data Integration capabilities include real-time data movement, advanced data transformation, data catalog and lineage automation, and API automation.

Snowflake Unistore

At the Snowflake Summit 2022 conference in June, data cloud service provider Snowflake debuted Unistore, a new service that processes transactional and analytical data workloads together on a single platform.

Unistore, according to Snowflake, simplifies and streamlines the development of transactional applications and provides consistent data governance capabilities, high performance and near-unlimited scalability.

While transactional and analytical data have traditionally been maintained separately, Unistore expands Snowflake’s data cloud to include transactional use cases. At the core of Unistore is Hybrid Tables, a new technology that supports single-row operations and allows users to build transactional applications directly on Snowflake and quickly perform analytics on transactional data.
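The idea of running transactional and analytical work against the same table can be illustrated with Python’s built-in `sqlite3` module. SQLite is only a stand-in here: this does not show Snowflake’s Hybrid Tables syntax or engine, and the `orders` table is hypothetical. The sketch performs single-row inserts and an update by primary key, then immediately runs an aggregate query over the same rows without copying them to a separate analytics system.

```python
import sqlite3

# In-memory SQLite database standing in for a platform that serves both workloads.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "order_id INTEGER PRIMARY KEY, customer TEXT NOT NULL, "
    "status TEXT NOT NULL, amount REAL NOT NULL)"
)

# Transactional side: single-row writes addressed by primary key, committed per block.
with conn:
    conn.execute("INSERT INTO orders VALUES (?, ?, ?, ?)", (1, "acme", "open", 120.0))
    conn.execute("INSERT INTO orders VALUES (?, ?, ?, ?)", (2, "globex", "open", 75.5))
    conn.execute("INSERT INTO orders VALUES (?, ?, ?, ?)", (3, "acme", "open", 30.0))
with conn:
    conn.execute("UPDATE orders SET status = ? WHERE order_id = ?", ("shipped", 1))

# Analytical side: aggregate over the same, just-updated rows (no ETL copy).
rows = conn.execute(
    "SELECT customer, status, SUM(amount) FROM orders "
    "GROUP BY customer, status ORDER BY customer, status"
).fetchall()
print(rows)  # [('acme', 'open', 30.0), ('acme', 'shipped', 120.0), ('globex', 'open', 75.5)]
```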

Snowflake also debuted the Native Application Framework, which customer and partner developers can use to build data-intensive applications, monetize them through the Snowflake Marketplace and run them directly within their Snowflake instances, reducing the need to move data.

Unistore and the Native Application Framework are key products for Snowflake’s plans to expand beyond its data management and data analytics roots into the realm of operational applications.