Nvidia GTC: Hopper GPUs, Grace CPUs, Software Debut With AI Focus

‘For AI training, H100 will offer four petaflops of performance, six times more than even the A100 [GPU]. It will also offer three times higher performance for a range of other precisions, including double precisions needed by some of the HPC applications. H100 will be the first product with HBM3 (high bandwidth memory) memory, and will deliver an astonishing three terabytes per second of memory bandwidth,’ says Paresh Kharya, Nvidia’s senior director of data center computing.

Nvidia Tuesday used its virtual Nvidia GTC conference to unveil a new accelerated computing platform named for pioneering computer scientist Grace Hopper.

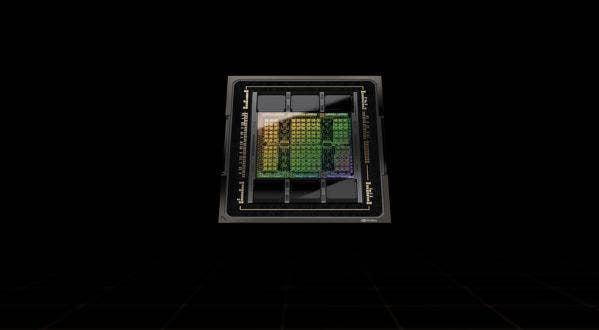

Along with the new Nvidia Hopper architecture, which succeeds the current two-year-old Nvidia Ampere architecture, the Santa Clara, Calif.-based company also introduced the Nvidia H100 GPU, the first GPU based on the Hopper platform.

Nvidia also introduced the Grace CPU Superchip, the company’s first data center CPU based on the Arm Neoverse design, along with major updates to its Nvidia AI platform.

Nvidia remains dedicated to providing technology to meet the demanding requirements of AI-focused applications, said Paresh Kharya, senior director of data center computing at Nvidia, during a pre-GTC press conference.

“AI has transformed every industry, and the use cases of AI are everywhere from image classifications to recommendation systems to natural language understanding,” Kharya said.

As an example, Kharya cited Google Transformer, a neural network architecture for deep learning for such areas as natural language processing and computer vision.

“[Google] brought unsupervised learning, and removed the need for labeled data,” he said. “This dramatically expanded the volume of data that could be used to train AI models. With Transformer models, AI learned natural human language. And today it's powering conversational AI applications everywhere. Transformers also helped AI learn other complex tasks like predicting the structure of proteins from the sequence of amino acids.”

Transformer models grow in size and complexity as they become more accurate, learning from enormous amounts of unlabeled data, and that growth drives up the computing power needed to train and run them.

To enable hardware to keep up with the performance requirements of such AI workloads, Nvidia Tuesday introduced what it called the next engine for AI infrastructures, the Nvidia Hopper GPU architecture and the Nvidia H100 GPU, which Kharya said were named after pioneering computer scientist and U.S. Navy rear admiral Grace Hopper.

The H100 GPU features 80 billion transistors on a single chip and is manufactured by TSMC using a 4-nanometer process. The H100 also includes a new Transformer engine aimed at accelerating Transformer models by six times over the previous architecture. Its fourth-generation NVLink delivers seven times the bandwidth of PCIe Gen 5 and can extend to systems outside the server, he said.

Hopper is also the world's first confidential computing GPU, a technology that previously was only available in CPUs, Kharya said. Confidential computing encrypts data while in use to protect it from unauthorized access, he said.

Hopper also features the next generation of multi-instance GPU technology, which was originally introduced with Ampere to partition a GPU into multiple isolated instances. It also adds new instructions to accelerate dynamic programming, a technique that breaks complex problems into simpler subproblems.
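To make the idea of dynamic programming concrete, here is a minimal Python sketch that computes the edit distance between two strings by building each answer from previously solved subproblems. The choice of edit distance and the function name `edit_distance` are illustrative assumptions; this is ordinary CPU code and does not represent Hopper's new instructions or any Nvidia API.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance via dynamic programming (illustrative example only)."""
    # prev[j] = distance between the prefix of `a` handled so far and b[:j].
    # Initially the prefix of `a` is empty, so the cost is j insertions.
    prev = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        # Turning a[:i] into the empty string costs i deletions.
        curr = [i]
        for j, ch_b in enumerate(b, start=1):
            substitution = prev[j - 1] + (0 if ch_a == ch_b else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            # Each cell reuses three smaller, already-solved subproblems.
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1]


if __name__ == "__main__":
    print(edit_distance("kitten", "sitting"))
```

Every cell in the table depends only on three neighboring cells, which is the kind of regular, repeated min/add pattern that dedicated hardware instructions could plausibly speed up.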

Performance in AI and other advanced workloads is significantly increased with the Hopper platform, Kharya said.

“For AI training, H100 will offer four petaflops of performance, six times more than even the A100 [GPU],” he said. “It will also offer three times higher performance for a range of other precisions, including double precisions needed by some of the HPC applications. H100 will be the first product with HBM3 (high bandwidth memory) memory, and will deliver an astonishing three terabytes per second of memory bandwidth. It'll also support PCIe Gen 5 and the fourth generation of Nvidia NVLink.”

Also new are the first systems to feature the Hopper architecture, the Nvidia DGX H100.

A single DGX H100 system will provide 32 petaflops of AI performance, which Kharya said is six times more than the previous DGX A100. Using NVLink, enterprises can create a 32-node DGX Superpod with one exaflop of AI performance and a bisection bandwidth of 70 terabytes per second, or 11 times higher than a comparable DGX A100 Superpod.

“The bandwidth of the entire Internet is 100 terabytes per second,” he said. “So you can imagine the amount of data flowing through.”

The company also introduced Nvidia EOS, a new supercomputer built with 18 DGX H100 Superpods featuring 4,600 H100 GPUs, 360 NVLink switches and 500 Quantum-2 InfiniBand switches, to deliver 18 exaflops of AI performance.
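The Superpod and EOS figures quoted above can be cross-checked with simple arithmetic. The Python sketch below uses the per-system, per-Superpod and Superpod-count numbers from the article; the figure of eight GPUs per DGX H100 system is an assumption not stated in the article, and the constant and function names are illustrative.

```python
PFLOPS_PER_DGX_H100 = 32   # from the article: AI performance of one DGX H100
NODES_PER_SUPERPOD = 32    # from the article: DGX systems in one Superpod
SUPERPODS_IN_EOS = 18      # from the article: Superpods in EOS
GPUS_PER_DGX_H100 = 8      # assumption: not stated in the article


def superpod_exaflops() -> float:
    """AI performance of one Superpod in exaflops (1 exaflop = 1,000 petaflops)."""
    return PFLOPS_PER_DGX_H100 * NODES_PER_SUPERPOD / 1000


def eos_exaflops() -> float:
    """AI performance of the full EOS system in exaflops."""
    return SUPERPODS_IN_EOS * superpod_exaflops()


def eos_gpu_count() -> int:
    """Total H100 GPUs in EOS under the eight-GPUs-per-system assumption."""
    return SUPERPODS_IN_EOS * NODES_PER_SUPERPOD * GPUS_PER_DGX_H100


if __name__ == "__main__":
    print(f"Superpod: {superpod_exaflops():.3f} exaflops")
    print(f"EOS:      {eos_exaflops():.3f} exaflops")
    print(f"EOS GPUs: {eos_gpu_count()}")
```

Under these assumptions the totals come out slightly above the rounded figures in the text: roughly one exaflop per Superpod, a little over 18 exaflops for EOS, and about 4,600 GPUs.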

“We expect it to be the world's fastest AI supercomputer when it’s deployed,” he said. “Because it uses Nvidia's Quantum-2 technology. It’ll also be a cloud native supercomputer. EOS will provide bare metal performance with multi-tenant isolation, as well as performance isolation to ensure that one application does not impact any other. And finally, the third generation of SHARP (Scalable Hierarchical Aggregation and Reduction Protocol) technology will ensure that in-network computing is available in multi-tenant environments.”

The H100 and its systems will provide a new level of performance for AI analytics, Kharya said.

“The H100 will offer up to nine times higher performance [than previously available],” he said. “Training in days, what used to take weeks. And it'll also enable the next generation of advanced data models to be created because they’ll be within the reach for the practical amount of time it takes to train them. And when these models are deployed for inference, H100 will offer an incredible 30 times more throughput.”

The H100 platform will also be available on mainstream servers via the new H100 CNX Converged Accelerator, Kharya said.

Current mainstream servers are limited in how much data they can feed to powerful GPUs, and high bandwidth situations can overload a CPU and system memory, he said.

“H100 CNX combines our most advanced H100 GPU with the most advanced ConnectX-7 NIC (network interface card) into a single module that can be deployed in mainstream servers via the PCIe connection to the CPU,” he said. “Data from the network uses GPU Direct RDMA technology, and it can completely bypass the CPU bottlenecks. In a mainstream server with four GPUs, H100 CNX will boost the bandwidth to the GPU by four times, and at the same time freeing up the CPU to process other parts of the application.”

H100 and related systems will be available starting in the third quarter of 2022, Kharya said.

One custom system builder, who spoke to CRN on condition of anonymity, said customers have been waiting for the debut of the H100 GPUs for deployment in their data centers.

“Customers are looking at the latest and greatest for their HPC and AI compute needs,” the system builder said. “They’ve been waiting to hear what content will be available, pricing, and what systems they will be available for.”

The Hopper platform will also power Nvidia's Grace CPUs, which the company unveiled last year, Kharya said. The Grace CPU Superchip, Nvidia's first Arm-based CPU, is a discrete data center CPU built on the Arm Neoverse design for AI infrastructure and high-performance computing. The Nvidia Grace CPU Superchip includes two CPU chips connected over NVLink-C2C, a newly introduced high-speed, low-latency, chip-to-chip interconnect, he said.

Hopper is also powering Nvidia's Grace Hopper accelerated CPU module, the company’s first data center CPU purpose-built for giant-scale high performance computing and AI, Kharya said.

“It has a breakthrough coherent chip-to-chip interconnect that enables us to create a super chip by connecting the Grace CPU with the Hopper GPU at 900 gigabytes per second,” he said. “We're talking about two really powerful chips connected together. This allows us to provide 30-times higher system memory bandwidth to the GPUs in a server compared to DGX A100.”

The custom system builder told CRN that customers have been waiting for the Grace CPU since it was first revealed last year.

“We were told it would be available in 2022, and we’re still waiting,” the system builder said. “Most of our customers still use Intel and AMD processors. ARM-based processors will likely be used for more specific applications.”

Nvidia also made some big additions to its AI software stack.

Nvidia is unique in its full-stack AI, the foundation of which is an accelerated AI infrastructure available in all public clouds, said Eric Pounds, senior director of enterprise computing for the company.

“At the top of the stack are AI skills to help enterprises and organizations solve some of the world's toughest challenges,” Pounds said. “Within each of these skills and beyond, there’s a thriving ecosystem to create many AI-powered solutions for a broad range of enterprises and organizations.”

Nvidia at GTC unveiled the Nvidia AI Accelerated program, which helps Nvidia software and solution ecosystem partners build and deliver optimized applications on top of the Nvidia AI platform, Pounds said. The program has over 100 members at launch, he said.

Nvidia also introduced three new Nvidia AI solutions.

The first, Nvidia AI Enterprise, is a software suite that enables organizations to harness the power of AI, even if they do not have AI expertise today, with proven open source containers and frameworks. With Nvidia AI Enterprise 2.0, the software is certified to run on common data center platforms from VMware and Red Hat, including virtualized environments, bare metal environments, and environments orchestrated for modern cloud native workloads, Pounds said.

“And they leverage mainstream Nvidia-certified servers configured with GPUs or with CPUs only,” he said. “So, yes, this is the first time we’re going to be supporting Nvidia AI Enterprise on CPU-only systems. And the software will also run across all major public clouds. ... With Nvidia AI enterprise software, AI is accessible to organizations of any size, providing the compute power, tools, and support so they can focus on creating business value from AI versus managing infrastructure stacks.”

The second is an enhancement to Nvidia RIVA, the company's accelerated speech software development kit, Pounds said. The new version, Nvidia RIVA 2.0, which is available now, provides automatic speech recognition in seven languages, human-like text-to-speech with professional male and female voices, and custom fine tuning with the Nvidia Tao toolkit.

The third is Nvidia Merlin 1.0, an accelerated end-to-end recommender framework that helps data scientists and machine learning engineers quickly build, optimize, and deploy high-performing recommenders at scale, Pounds said. It is currently available.